Automated BIM Generation from LiDAR Point Clouds

Alexandr Migachev, Head of Delivery

March 17, 2026

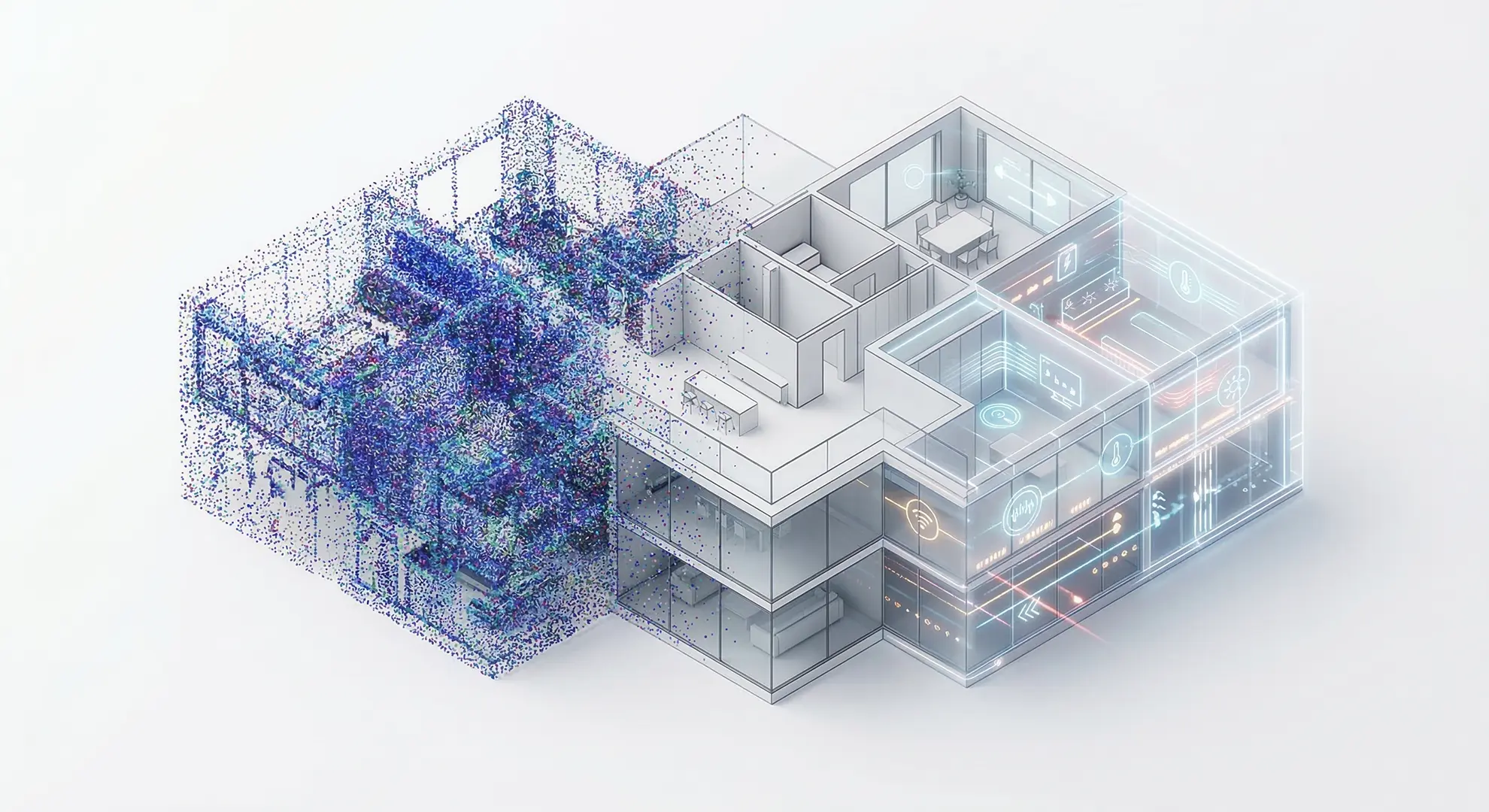

A Machine Learning Pipeline for Scalable Digital Twin Creation

As the demand for high-fidelity spatial data grows, the bottleneck in the AEC industry has shifted from data collection to data processing. Automated BIM generation from LiDAR point clouds is now a critical milestone for firms looking to scale their operations. Using machine learning, Timspark has developed a pipeline that transforms raw laser scans into structured, BIM-ready architectural models and automates 70–80% of building element extraction.

Key Outcomes

- Automation Level: 70–80% automated extraction of building elements.

- Model Accuracy: mIoU of 0.7631 across key architectural classes.

- Compatibility: Full Revit, AutoCAD, and BIM 360 integration.

- Scalability: Cloud-native deployment on AWS and Azure.

Executive Overview

Digital twin platforms are rapidly becoming essential infrastructure for modern buildings, enabling indoor navigation, facility management, safety planning, and operational analytics. However, the process of creating digital twins still relies heavily on manual reconstruction of buildings from LiDAR scans.

A geospatial technology company approached our team to solve this bottleneck. Their workflow relied on mobile LiDAR scanning systems that produced dense point clouds of indoor environments, containing millions of spatial measurements. Before these scans could be used in their digital twin platform, the data had to be converted into structured BIM models.

This conversion required specialists to manually reconstruct architectural elements, including walls, doors, windows, floors, and structural components. For large buildings, the process could take days or even weeks, limiting the scalability of the client’s operations.

Our goal was to design a machine learning system capable of automatically converting point clouds into BIM-ready architectural structures. The client did not require full automation; a solution that could automate up to 70–80% of building element extraction would already deliver significant operational benefits.

The resulting solution combines deep learning-based 3D scene understanding with geometric reconstruction algorithms, enabling automated generation of structured BIM models directly from LiDAR scans.

The Challenge

LiDAR scanners capture buildings with extraordinary precision, but the resulting point clouds are inherently unstructured. A typical indoor scan may contain tens or hundreds of millions of points representing surfaces throughout the building.

Although this data accurately represents the physical environment, it lacks semantic meaning. A point cloud does not explicitly describe which points represent walls, doors, columns, or mechanical systems. Human engineers must interpret this structure and manually recreate the building inside BIM software.

This manual step represents one of the largest bottlenecks in the production of digital twins. The challenge was therefore not simply processing large volumes of 3D data, but teaching machines to understand architectural structure within that data.

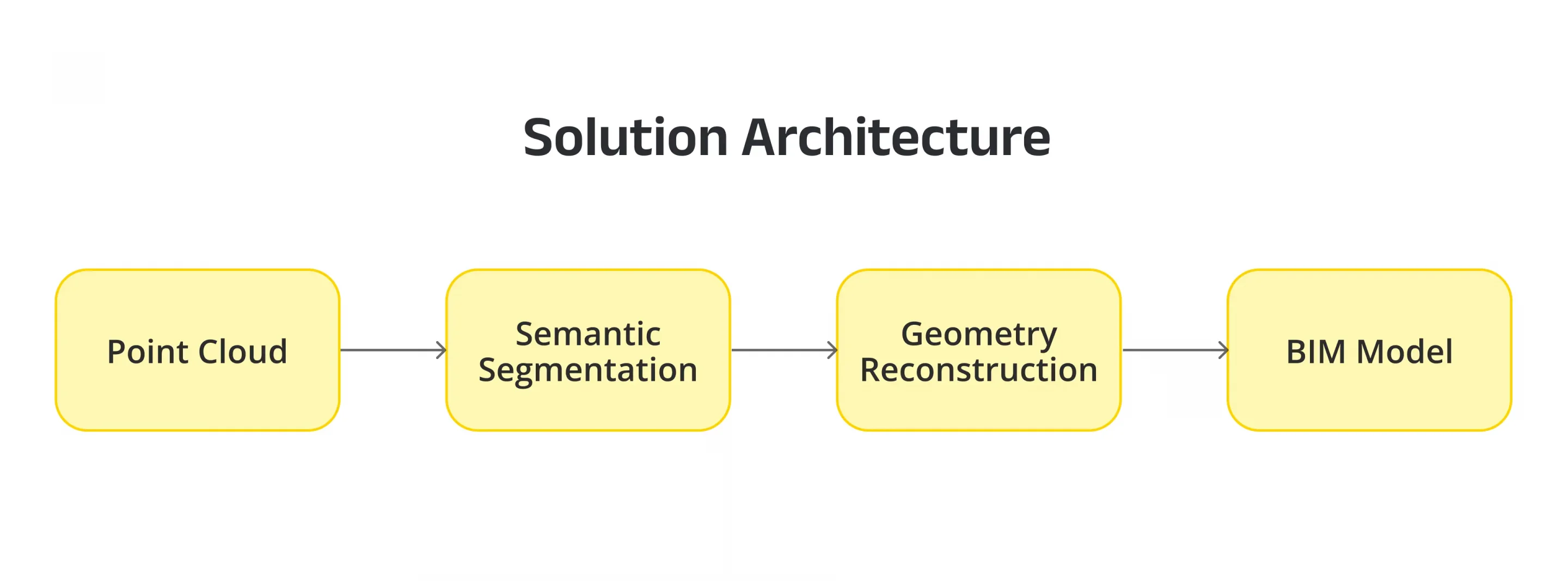

Solution Architecture: How LiDAR Point Clouds are Converted into BIM

The system we designed follows a multi-stage pipeline that converts raw spatial data into structured building models.

Stage 1: Point Cloud Preprocessing

The incoming point cloud is first standardized to ensure consistent processing by machine learning models. This stage includes coordinate normalization, noise filtering, density balancing, and spatial partitioning of large point clouds into manageable segments suitable for GPU processing.

Partitioning is particularly important because indoor scans often contain hundreds of millions of points, requiring efficient memory management during model inference.

This stage was implemented using Python-based data processing pipelines, leveraging OpenCV for preprocessing utilities and PCL (Point Cloud Library) for point cloud filtering, geometric transformations, and spatial data manipulation.
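The preprocessing steps above can be illustrated with a minimal NumPy sketch. It is not the production code, which relies on PCL. The function names, the voxel size, the tile size, and the `min_points` noise heuristic are all assumptions chosen for illustration.

```python
import numpy as np

def normalize_coordinates(points):
    """Shift the cloud so its minimum corner sits at the origin."""
    return points - points.min(axis=0)

def voxel_downsample(points, voxel_size, min_points=1):
    """Replace the points in each occupied voxel with their centroid.

    Voxels holding fewer than `min_points` points are dropped, which acts
    as a crude noise filter (an assumption, not the filter used in the project).
    """
    keys = np.floor(points / voxel_size).astype(np.int64)
    _, inverse, counts = np.unique(
        keys, axis=0, return_inverse=True, return_counts=True
    )
    inverse = inverse.reshape(-1)
    sums = np.zeros((counts.size, 3))
    np.add.at(sums, inverse, points)  # accumulate coordinates per voxel
    keep = counts >= min_points
    return sums[keep] / counts[keep, None]

def partition_xy(points, tile_size):
    """Group points into square floor-plan tiles: {(ix, iy): points}."""
    tiles = np.floor(points[:, :2] / tile_size).astype(np.int64)
    blocks = {}
    for key in np.unique(tiles, axis=0):
        mask = np.all(tiles == key, axis=1)
        blocks[tuple(key.tolist())] = points[mask]
    return blocks
```

Each tile can then be passed to the segmentation model independently, which keeps GPU memory use bounded regardless of the size of the building.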

Stage 2: Semantic Segmentation for Point Cloud to BIM Conversion

The core of the system is a deep learning model designed for 3D semantic segmentation. The model analyzes the spatial structure of the point cloud and classifies each point according to its architectural role.

Typical classes include:

– walls

– floors

– roofs

– doors

– windows

– structural columns

– pipes and mechanical elements

– equipment and furniture

To determine the most suitable architecture, multiple state-of-the-art 3D models were evaluated during the research phase. Candidate models included transformer-based architectures and sparse convolutional neural networks commonly used for large-scale 3D scene understanding.

The implementation was built using Python-based deep learning frameworks, including PyTorch, enabling flexible experimentation with different model architectures.

For large-scale point cloud understanding, the team leveraged the Pointcept framework, an open-source platform for advanced 3D scene understanding and point cloud segmentation. Pointcept provides implementations of modern transformer-based architectures optimized for large spatial datasets.

Point Transformer V2 architectures demonstrated the strongest performance due to their ability to capture long-range spatial relationships across complex indoor environments. These models learn architectural patterns directly from data, enabling them to recognize structural components even in noisy, irregular point clouds.
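To make the per-point classification step concrete, here is a small sketch of chunked inference. The `toy_logits` function is a placeholder that stands in for a trained network. It is not Point Transformer V2 and does not use the Pointcept API. The class order and the chunk size are also assumptions.

```python
import numpy as np

# Class names follow the list above; the integer ids are an assumption.
CLASS_NAMES = ["wall", "floor", "roof", "door", "window",
               "column", "pipe", "furniture"]

def segment_in_chunks(points, predict_logits, chunk_size=100_000):
    """Run a per-point classifier chunk by chunk; return one label per point."""
    labels = np.empty(len(points), dtype=np.int64)
    for start in range(0, len(points), chunk_size):
        chunk = points[start:start + chunk_size]
        logits = predict_logits(chunk)  # shape: (len(chunk), n_classes)
        labels[start:start + len(chunk)] = logits.argmax(axis=1)
    return labels

def toy_logits(chunk):
    """Placeholder model: scores 'floor' for low points, 'wall' otherwise."""
    logits = np.zeros((len(chunk), len(CLASS_NAMES)))
    is_low = chunk[:, 2] < 0.1
    logits[is_low, CLASS_NAMES.index("floor")] = 1.0
    logits[~is_low, CLASS_NAMES.index("wall")] = 1.0
    return logits
```

In a real pipeline the chunks would be spatial tiles rather than arbitrary slices, since a network needs neighboring points to capture context.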

Stage 3: Geometry Reconstruction for BIM-Ready Models

Once the building’s semantic structure is identified, the system converts labeled point clusters into architectural geometry.

Raw point clouds are inherently noisy and irregular, while BIM environments require precise parametric elements. To bridge this gap, we implemented a geometry reconstruction layer that applies geometric fitting algorithms and architectural constraints.

Examples include:

– plane fitting for walls, floors, and ceilings

– detection of openings within wall segments for doors and windows

– primitive fitting for columns and vertical structures

– orthogonalization of intersecting surfaces

These algorithms transform segmented point clusters into clean architectural primitives suitable for BIM modeling.

This stage relies heavily on the Point Cloud Library (PCL) for geometric operations such as plane extraction, clustering, and primitive fitting.
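As an illustration of plane fitting, the sketch below implements a basic RANSAC plane fit in NumPy, followed by a least-squares refinement on the inliers. It is a simplified stand-in for the PCL routines mentioned above. The distance threshold, the iteration count, and the function name are assumptions.

```python
import numpy as np

def fit_plane_ransac(points, distance_threshold=0.02, iterations=200, seed=0):
    """Fit a plane n.x + d = 0 to the points.

    Returns (normal, d, inlier_mask), where inlier_mask marks the points
    within distance_threshold of the best RANSAC candidate.
    """
    rng = np.random.default_rng(seed)
    best_mask = np.zeros(len(points), dtype=bool)
    for _ in range(iterations):
        sample = points[rng.choice(len(points), size=3, replace=False)]
        normal = np.cross(sample[1] - sample[0], sample[2] - sample[0])
        length = np.linalg.norm(normal)
        if length < 1e-9:  # collinear sample, no unique plane
            continue
        normal = normal / length
        d = -normal.dot(sample[0])
        mask = np.abs(points @ normal + d) < distance_threshold
        if mask.sum() > best_mask.sum():
            best_mask = mask
    if not best_mask.any():
        raise ValueError("no non-degenerate sample found")
    # Refine: the least-squares plane through the inliers has its normal
    # along the direction of smallest variance (last right singular vector).
    inliers = points[best_mask]
    centroid = inliers.mean(axis=0)
    _, _, vt = np.linalg.svd(inliers - centroid)
    normal = vt[-1]
    return normal, -normal.dot(centroid), best_mask
```

Applied to the points labeled as a single wall, the returned normal and offset define the wall plane, and the inlier mask separates wall points from stray points that were mislabeled.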

Additional post-processing ensures that the resulting structures adhere to architectural conventions, such as right-angle intersections between walls and consistent wall thickness.
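One possible way to enforce right-angle intersections is to snap wall directions to a shared orthogonal grid. The sketch below estimates the dominant orientation of the building from the wall angles and snaps nearby walls to it. The 5-degree tolerance and the function names are assumptions, and walls outside the tolerance (for example, genuinely diagonal walls) are left unchanged.

```python
import numpy as np

def dominant_orientation(angles_rad):
    """Estimate the main building axis from wall direction angles.

    Multiplying angles by 4 maps directions that differ by 90 degrees
    onto the same point of the unit circle, so a circular mean can
    average them together.
    """
    mean = np.arctan2(np.sin(4 * angles_rad).mean(),
                      np.cos(4 * angles_rad).mean())
    return mean / 4

def snap_to_grid(angles_rad, tolerance_deg=5.0):
    """Snap each angle to the nearest grid axis if within tolerance."""
    base = dominant_orientation(angles_rad)
    step = np.pi / 2
    snapped = base + np.round((angles_rad - base) / step) * step
    close = np.abs(angles_rad - snapped) <= np.deg2rad(tolerance_deg)
    return np.where(close, snapped, angles_rad)
```

A fuller version would likely weight each wall by its length so that long walls dominate the orientation estimate.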

Stage 4: Automating Scan-to-BIM for Digital Twin Creation

The reconstructed geometry is converted into parametric BIM elements and exported in industry-standard formats compatible with digital twin platforms.

The system integrates with industry-standard BIM and CAD tools, including:

– AutoCAD

– Autodesk Revit

– BIM 360

These tools are used to generate structured architectural objects and manage BIM datasets within collaborative design environments.

The generated models include structured building components such as walls, floors, doors, windows, and structural columns. Because the geometry is already organized into BIM primitives, it can be directly integrated into spatial analytics and indoor mapping workflows.
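To show what a parametric element might look like before it is handed to a BIM tool, here is a hypothetical intermediate representation serialized as JSON. The `WallElement` schema and its field names are invented for illustration. They do not reflect the Revit, AutoCAD, or BIM 360 APIs, or the export format used in the project.

```python
import json
import math
from dataclasses import dataclass, asdict

@dataclass
class WallElement:
    """Hypothetical parametric wall: a centerline plus height and thickness."""
    start_xy: tuple[float, float]
    end_xy: tuple[float, float]
    height_m: float
    thickness_m: float

    def length_m(self) -> float:
        return math.dist(self.start_xy, self.end_xy)

def to_record(wall: WallElement) -> dict:
    """Convert a wall to a plain dict with derived fields added."""
    record = asdict(wall)
    record["category"] = "wall"
    record["length_m"] = wall.length_m()
    return record

def export_elements(walls: list[WallElement]) -> str:
    """Serialize walls to a JSON document that an exporter could consume."""
    return json.dumps({"elements": [to_record(w) for w in walls]}, indent=2)
```

Describing a wall by a few parameters rather than thousands of points is what makes the output editable in BIM software.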

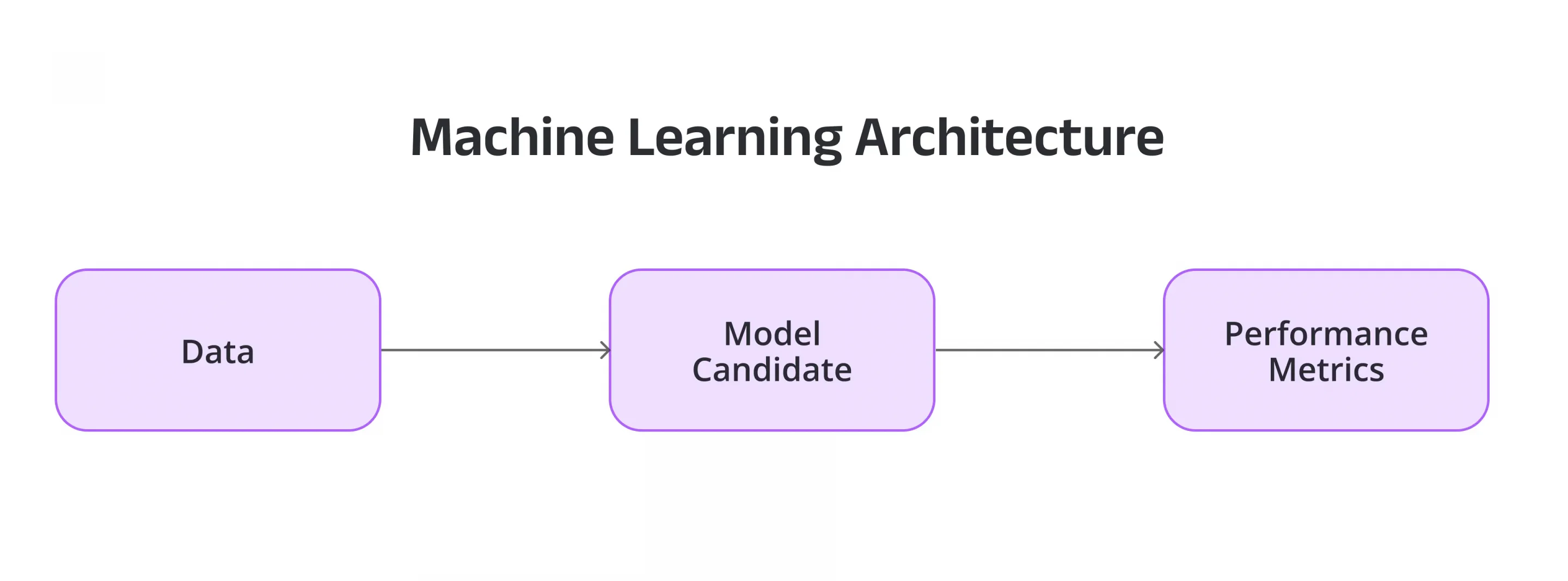

Machine Learning Architecture

During the research phase, we evaluated multiple model families using a standard benchmarking pipeline:

Data → Model Candidate → Performance Metrics

The deep learning training pipeline was implemented using PyTorch and TensorFlow, enabling distributed model training on GPU clusters.

Model Performance

The final trained model achieved strong overall performance and proved reliable for automated BIM generation from LiDAR point clouds. Accuracy was lower mainly for smaller or more complex elements such as doors.

Overall performance metrics included:

– Mean Intersection-over-Union (mIoU): 0.7631

– Mean classification accuracy (mAcc): 0.8827

Per-class performance highlights include:

– Floors: IoU 0.9448

– Walls: IoU 0.8167

– Windows: IoU 0.8311

– Doors: IoU 0.6445

– Structural Columns: IoU 0.6989
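For readers unfamiliar with these metrics, the sketch below shows how per-class IoU, mIoU, and mAcc are commonly computed from a confusion matrix of per-point labels. It uses the standard definitions, assuming the project evaluation followed them. The function names are illustrative.

```python
import numpy as np

def confusion_matrix(y_true, y_pred, n_classes):
    """Rows are ground-truth classes, columns are predicted classes."""
    index = y_true * n_classes + y_pred
    counts = np.bincount(index, minlength=n_classes ** 2)
    return counts.reshape(n_classes, n_classes)

def segmentation_metrics(cm):
    """Return (per-class IoU, mIoU, mAcc); absent classes are ignored."""
    tp = np.diag(cm).astype(float)
    fp = cm.sum(axis=0) - tp  # predicted as the class, but wrong
    fn = cm.sum(axis=1) - tp  # belongs to the class, but missed
    union = tp + fp + fn
    support = tp + fn
    iou = np.where(union > 0, tp / np.maximum(union, 1), np.nan)
    acc = np.where(support > 0, tp / np.maximum(support, 1), np.nan)
    return iou, np.nanmean(iou), np.nanmean(acc)
```

IoU penalizes both missed points and false positives, which is why it is typically lower than accuracy for the same class. The five highlighted classes average about 0.787, slightly above the reported mIoU of 0.7631, which is consistent with the mean also covering lower-scoring classes not listed here.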

These results met the project objective of 70–80% automated BIM element extraction, with the remainder handled through manual verification and refinement.

Deployment Architecture

The solution was deployed as a scalable machine learning pipeline running on cloud infrastructure in AWS and Azure. These environments provided the GPU resources required for model training and inference on large point cloud datasets.

The production system includes:

– Python-based ML inference services

– deep learning models trained with TensorFlow and PyTorch

– point cloud processing modules built with PCL

– geometry reconstruction pipelines

– BIM export modules integrated with AutoCAD, Revit, and BIM 360

This modular architecture allows the client to automatically process new scans as they are generated, transforming raw spatial data into structured digital building models.

Results & Impact

The implementation significantly improved the efficiency of the digital twin production pipeline.

Key outcomes include:

– automated extraction of major architectural elements from point clouds

– reduction of manual BIM modeling effort by approximately 70–80%

– faster turnaround time for building model generation

– scalable processing of large building portfolios

Engineers now begin with machine-generated BIM models that already contain most of the building geometry, allowing them to focus on validation rather than manual reconstruction.

This project demonstrates how advances in 3D deep learning and spatial computing can transform building digitization workflows. By combining semantic scene understanding with geometric reconstruction techniques, the system converts raw LiDAR scans into structured building models with a high level of automation.

The approach enables organizations working with large volumes of spatial data to scale digital twin creation while maintaining architectural accuracy. As LiDAR sensing continues to expand across industries, machine learning systems capable of interpreting spatial environments will play a central role in turning raw spatial data into actionable digital infrastructure.

FAQ

What is point cloud to BIM conversion?

It is the process of taking raw 3D data from a laser scanner (point clouds) and transforming it into a structured Building Information Model (BIM) with parametric objects like walls and windows.

How does LiDAR data become a BIM model?

The data passes through a pipeline of preprocessing, AI-driven semantic segmentation (identifying building components), and geometric reconstruction (fitting shapes to points) before being exported to BIM software.

What role does machine learning play in Scan-to-BIM?

Machine learning automates the "recognition" phase. Instead of a human manually clicking on points to define a wall, the AI automatically classifies millions of points into architectural categories.

How accurate can automated BIM generation be?

Our system achieved a mean Intersection-over-Union (mIoU) of 0.7631. In practice, 70–80% of building elements are extracted automatically, with the remainder handled through manual verification and refinement.

Which BIM tools can integrate with this workflow?

The pipeline is compatible with industry standards, including Autodesk Revit, AutoCAD, and BIM 360.
